|

|

RepeaterBots |

The new RepeaterBot site, which describes a different approach, is now here.

The Goal

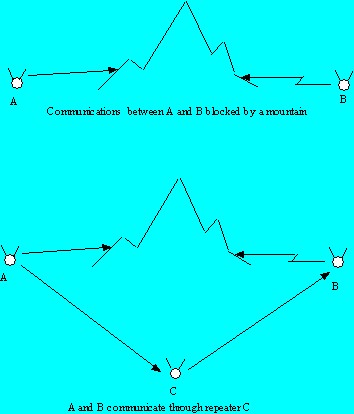

The goal of the RepeaterBots project is to construct controllers for simulated robots that carry VHF repeaters into unexplored terrain. The robots' mission is to explore a new area and provide full radio coverage via the repeaters they carry.VHF and UHF radios are limited to a large degree to line-of-site communications. To provide communications in a hilly region, radio repeaters can be used to pick up a signal from some source A and retransmit it to a receiver B that is located in some area blocked from receiving signals directly from A. Repeaters are typically placed on a mountain or tower; however, a repeater can be placed near the end of a ridge to provide communications to and from radios on both sides of the ridge as illustrated below.

Using autonomous robots to place repeaters would be useful in a number of applications. For example, robots exploring the landscape of a distant planet could share information and strategy over their radio links. In hilly terrain, any particular robot might not be able to hear all the other robots due to signal blockage. However, intelligent robots could position themselves to facilitate exploring while still maintaining a wide coverage, repeater-based network.

Q-Learning Approach

The general problem is difficult, so a simpler version of the problem

was defined called the 2 beacon, 2 repeater problem. In this problem,

there are two fixed beacons and two mobile robots carrying radio

repeaters. The current incarnation of this problem is laid out on

a 30 x 30 grid with beacons at coordinates (0,0) and (0,29), and the

mobile robots start at coordinates (2,2). The beacon at (0,29) is

blocked from the other beacon and the mobile robots by obstacles

The mobile robots must explore the terrain and place themselves

so that messages can be passed between all the beacons and all the

robots either through line-of-site communications or by using one of

the robots as a repeater.My initial attempt to solve this problem uses Q-learning, a reinforcement learning technique¹. The robots can sense their immediate surrounding and know which beacons and robots they can hear. Based on this input from the environment, the robots can internally generate a reward for each action they take. The robots are trained on three mazes, and then attempt to solve the repeater placement problem for the three training mazes and two new mazes.

Although convergence is difficult in this setup (see Tuning Q-Learning Parameters with a Genetic Algorithm²), the Q-learning approach did partially succeed. With careful tuning of learning and reward parameters, the robots consistently converged to a solution for the three training mazes. Only occasionally did the trained robots solve one or both of the additional two mazes.

See RepeaterBot Q-Learning Notes³ for more details on the approach. Evolution of Meta-parameters in Reinforcement Learning 4 is a master's thesis on using a GA to evolve q-learning parameters.

Click here

to view solutions to all mazes from one RepeaterBot run.

to view solutions to all mazes from one RepeaterBot run.The algorithm was able to solve three or more mazes 80% of the time. (With different tuning, a 90% rate was attained but with less probability of finding a solution to all five mazes.) Solutions to four mazes were found about 25% of the time, and all mazes were solved about 10% of the time. Tuning also affected the average number of trails needed to solve the mazes.

Analysis of the Approach

The preliminary approach to the repeater placement problem demonstrates

that Q-leanring is applicable in some simple problems. There are

two primary problems with this approach. First, the robot

training/reward system is not guaranteed to be an Markov Decision

Process (MDP) in general, so the robot learning might not converge in

many situations. Second, the trained robots do not generalize

their exploring/repeater placement

skills well. Training over a wide variety of mazes might improve

robot

performance if the training converges, but it is not possible to train

the

robots for all situations that might encounter in an unknown

environment.The 2 beacon, 2 robot problem is a simple version of the full repeater placement problem. In a real environment, one can envision terrain that would require many robots. The simple version of the problem does not address a reward system that would work in the general case.

The simple approach does not use robot cooperation. But, in a system where robots can communicate over their radio links, valuable information could be passed among robots that would potentially help with repeater placement and exploration.

Another Approach

In the preliminary approach, robots can determine if they or other

robots had explored a square. In the simulation, the mechanism

used to determine previous exploration is not relevant. However,

with real robots, several approaches could be used. One approach

would be for each robot to memorize its progress by tracking its own

direction and distance or by using GPS.

A robot could track other robots in the repeater chain if they

broadcasted

their coordinates each time they moved. But, from an artificial life

point

of view, any such tracking mechanism is similar to a pheromone trail

used

by insects such as ants.By using this analogy to ants, trail-following techniques could be used by robots to build repeater chains in a manner that ants use to return food to their nest. Robots losing repeater contact could retrace their trails until they were again in repeater range of other robots and then transmit their coordinates and path to recruit other robots to follow them. Or, groups of robots could explore a path together. When radio communications with other sections of a maze were lost, one of the robots could retrace their trail to try to reinstate communications with other robots. Or, a more strict ant-like simulation could be used where the robots explore and return to their "nest". The trails of all the exploring robots could be used to construct a map of the terrain that could be used to plan repeater placement. But, in the case of RepeaterBots, returning to the "nest" is inefficient as long as location information can be broadcast to other robots.

There are a number of challenges associated with this approach to the repeater placement problem. First, trail retracing will disrupt exploration until more robots can be enlisted to provide intermediate communications. In the case where more robots are needed to provide communications than are available, some mediation is needed between the goals of exploring and communicating with other robots.

Second, because there are multiple robots moving independently, trail retracing might not immediately restore full radio coverage. So, there is a challenge to build robust controllers that can handle situations where communications are lost indefinitely or where other robots are lost due to mechanical or environmental conditions.

An appealing feature of the artificial life approach to the repeater placement problem is that it should be easily applicable to a large number of exploring robots because they can have relatively simple controllers that cause the robots to explore and follow "pheromone" trails. I believe this approach will scale well as the number of robots and the size of the area to be explored increase.

The ALIFE version of the RepeaterBot system is currently under development.

Footnotes

¹ Sutton, R. and Barto, A. (1998). Reinforcement Learning. MIT Press, Cambridge, MA.

² Tuning Q-Learning Parameters with a Genetic Algorithm

³ RepeaterBot Q-Learning Notes

4 Eriksson, A. 2002. Evolution of Meta-parameters in Reinforcement Learning. Master's Thesis.

| This site © copyright 2004 by

Ben E. Cline. Contact info: |

|

8/2004 |